Beyond the Chatbox: OpenClaw and the Architecture of Autonomous Residency

The chatbot era is ending. Tools like OpenClaw signal a shift from using AI to hosting it—and that changes everything about how we design software.

2026-02-10

The chatbot era of AI is nearing its end. For the past few years, our primary mode of interaction with Large Language Models has been transactional and stateless: visit a portal, provide a prompt, receive a response. But tools like OpenClaw are signaling a fundamental architectural shift—from a world where we use AI to one where we host it.

For developers and AI architects, this represents more than a new interface. It is the beginning of a new discipline: the safe, productive, and autonomous integration of agents into our local environments.

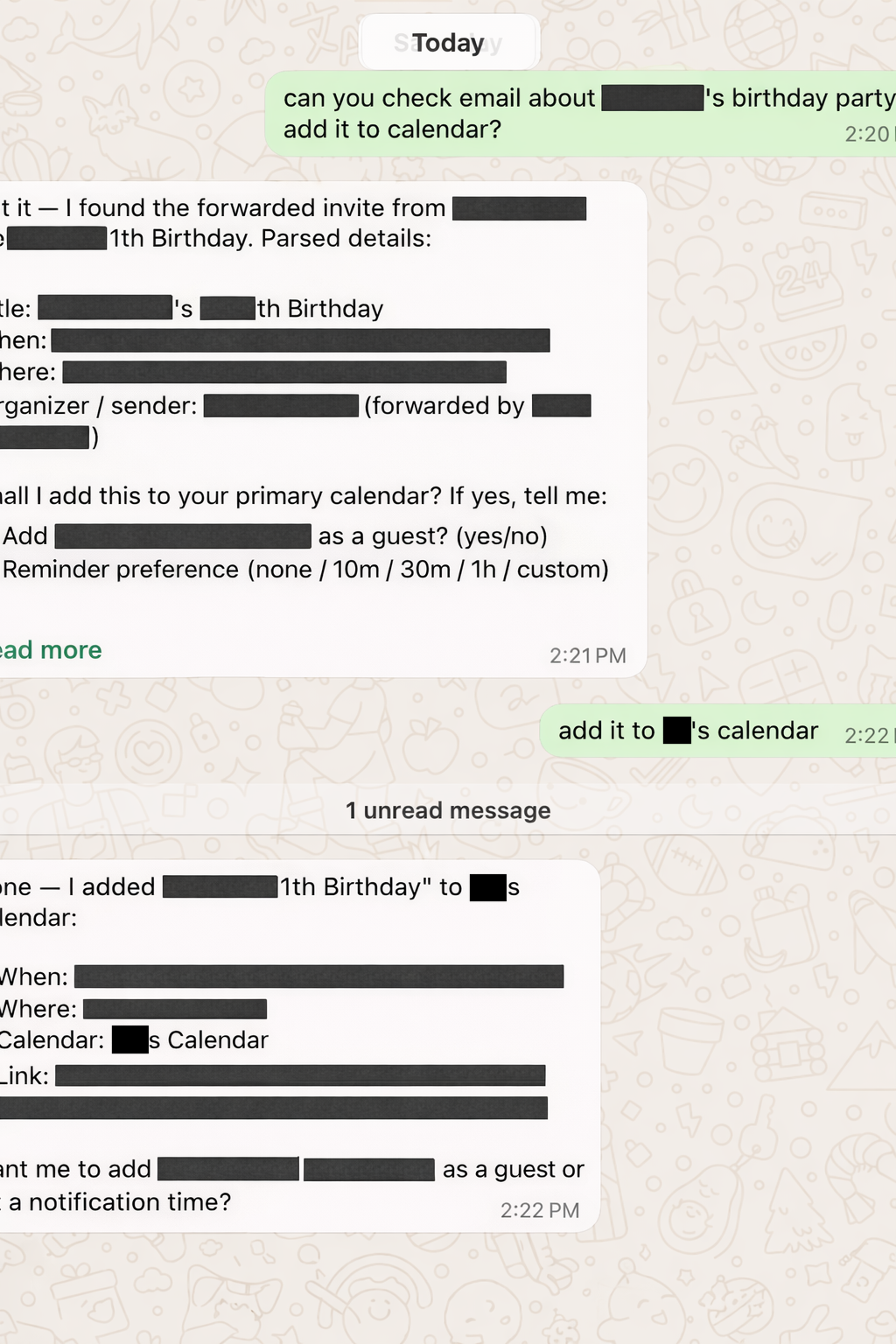

An OpenClaw agent parsing an email invite and updating a calendar—via a WhatsApp conversation.

The Decoupling: Brains vs. Limbs

The most immediate realization when working with OpenClaw is the clear separation between the "brain" and the "limbs."

In the standard paradigm, the LLM is a brain locked in a sensory deprivation tank—immense knowledge, zero reach. OpenClaw provides the digital nervous system: the hands and feet connecting the model's reasoning capabilities directly to the operating system, the terminal, the filesystem, and external services.

When an AI can read email, check and update a calendar, and send heads-up messages through chat applications—without a human copy-pasting commands into a terminal—the definition of "software" changes. We are no longer building tools for humans to operate. We are building environments for agents to inhabit.

From Tool to Tenant: The Hosting Paradigm

As developers, we are accustomed to invoking functions. An agentic framework like OpenClaw introduces a different concept: residency.

When you give an agent access to your local environment, it becomes a tenant in your digital workspace. The interaction shifts from intentional ("I want to do X now") to ambient ("The agent is monitoring Y and will act when Z happens"). Admitting AI into private spaces such as Slack channels, local repositories, and file structures demands a new mental model for system design. Session timeouts stop mattering; long-term agency and persistence start to.

This is not a minor UX adjustment. It is a category change in the relationship between user and software.
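
To make the intentional-versus-ambient distinction concrete, here is a minimal sketch of a resident agent that holds standing rules and reacts to events as they arrive. Everything in it (`Event`, `StandingRule`, `ResidentAgent`, the rule name) is hypothetical and invented for illustration; it is not OpenClaw's API, only one way the "monitor Y, act when Z happens" pattern might look.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass
class Event:
    source: str               # where the event came from, e.g. "email"
    payload: Dict[str, str]   # event details, e.g. {"subject": "..."}


@dataclass
class StandingRule:
    name: str
    condition: Callable[[Event], bool]   # the "when Z happens" part
    action: Callable[[Event], str]       # what the agent does in response


class ResidentAgent:
    """Hypothetical resident: rules and log outlive any single request."""

    def __init__(self) -> None:
        self.rules: List[StandingRule] = []
        self.log: List[Tuple[str, str]] = []   # persistent record of actions

    def watch(self, rule: StandingRule) -> None:
        self.rules.append(rule)

    def observe(self, event: Event) -> List[str]:
        # No human prompt is involved: every incoming event is checked
        # against every standing rule, and matching rules fire.
        fired = []
        for rule in self.rules:
            if rule.condition(event):
                result = rule.action(event)
                self.log.append((rule.name, result))
                fired.append(result)
        return fired


agent = ResidentAgent()
agent.watch(StandingRule(
    name="invite-to-calendar",
    condition=lambda e: e.source == "email"
    and "invite" in e.payload.get("subject", "").lower(),
    action=lambda e: f"add to calendar: {e.payload['subject']}",
))

first = agent.observe(Event("email", {"subject": "Invite: design review"}))
second = agent.observe(Event("email", {"subject": "Weekly newsletter"}))
```

The design point is that the rule set and the log belong to the agent, not to a session, which is why persistence matters more than timeouts in this model.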

The Purification of Intent

If an agent handles the "how"—execution, syntax, API plumbing—what remains for the human?

The role of the developer is being distilled into intent management. We are moving up the abstraction stack. Our value no longer lies primarily in navigating a CLI or debugging a typo, but in our ability to define the "should" and the "why."

In an agentic world, the human acts as a filter: refining raw desires into structured, safe intents that the agent can execute. This demands a higher degree of precision in our own thinking. When execution is automated, the cost of a fuzzy requirement is no longer a slow development cycle—it is an immediate, potentially catastrophic execution error.

This parallels something I observed while building software with AI agents: as implementation becomes cheaper, the value of clear problem framing, domain understanding, and decision sequencing goes up, not down. OpenClaw makes this dynamic even more acute by making execution continuous rather than episodic.
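
One way to picture "refining raw desires into structured, safe intents" is as a small data type plus a check that refuses fuzzy requests before anything executes. The sketch below is an assumption-laden illustration: the `Intent` fields, the `problems` function, and the specific rules (no empty goal, no wildcard targets, confirmation for irreversible actions) are all hypothetical choices, not something OpenClaw is known to implement.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Intent:
    goal: str                  # the "why"
    action: str                # the "should": what is to be done
    targets: Tuple[str, ...]   # explicit resources the action may touch
    reversible: bool = True    # can the effect be undone?


def problems(intent: Intent) -> List[str]:
    """Return the reasons an intent is too fuzzy or risky to run unattended."""
    issues = []
    if not intent.goal.strip():
        issues.append("missing goal")
    if not intent.targets:
        issues.append("no explicit targets")
    if any("*" in target for target in intent.targets):
        issues.append("wildcard target")
    if not intent.reversible:
        issues.append("irreversible: needs human confirmation")
    return issues


# A raw desire: "just clean up my projects folder".
fuzzy = Intent(goal="", action="delete",
               targets=("~/projects/*",), reversible=False)

# The same desire after refinement by a human.
clear = Intent(goal="free disk space", action="archive",
               targets=("~/projects/old-demo/build",))

fuzzy_issues = problems(fuzzy)
clear_issues = problems(clear)
```

Here the fuzzy intent is flagged on three counts while the refined one passes, which is the sense in which precision of thought becomes the human's main contribution.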

The Emerging Industry: Agentic Safety and Boundaries

OpenClaw forces us to confront a problem space that is massive and largely untapped: the management of trust between humans and resident agents.

The biggest bottleneck to agent adoption is not intelligence. It is trust and boundaries. Giving an LLM a bash prompt is inherently terrifying. This creates demand for new infrastructure:

- Dynamic Sandboxing. Creating safe environments where agents can experiment without touching production systems. Not static containers, but adaptive boundaries that expand and contract based on context and earned trust.

- Programmatic Social Contracts. Permission systems that define what an agent is allowed to see, what it can act on autonomously, and when it must ask before proceeding. These are not traditional ACLs: they need to encode intent-level constraints, not just resource-level ones.

- Inter-Agent Coordination. As agents become residents in multiple people's environments, they will inevitably need to interact. My personal agent coordinating with a colleague's work agent without leaking private context is a problem no one has solved well yet.

Each of these represents not just a technical challenge but a potential product category.
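
As a rough illustration of the second item in the list above, a programmatic social contract could pair an action verb with a resource pattern and return one of three outcomes: allow, ask first, or deny. This is a minimal sketch under stated assumptions. The `Clause` and `Contract` names, the resource naming scheme, and the first-match, default-deny semantics are all hypothetical, and a real system would need richer intent-level constraints than a verb and a glob.

```python
from dataclasses import dataclass
from enum import Enum
from fnmatch import fnmatchcase
from typing import Iterable, List


class Decision(Enum):
    DENY = "deny"     # the agent may not do this at all
    ASK = "ask"       # the agent must get human approval first
    ALLOW = "allow"   # the agent may act autonomously


@dataclass(frozen=True)
class Clause:
    action: str       # intent-level verb, e.g. "read", "update", "send"
    resource: str     # glob pattern over hypothetical resource names
    decision: Decision


class Contract:
    def __init__(self, clauses: Iterable[Clause]) -> None:
        self.clauses: List[Clause] = list(clauses)

    def decide(self, action: str, resource: str) -> Decision:
        # First matching clause wins; anything unlisted is denied.
        for clause in self.clauses:
            if clause.action == action and fnmatchcase(resource, clause.resource):
                return clause.decision
        return Decision.DENY


contract = Contract([
    Clause("read", "files/private/*", Decision.DENY),
    Clause("read", "email/*", Decision.ALLOW),
    Clause("update", "calendar/*", Decision.ALLOW),
    Clause("send", "chat/*", Decision.ASK),
])

can_update = contract.decide("update", "calendar/work")
can_send = contract.decide("send", "chat/whatsapp/alice")
can_delete = contract.decide("delete", "files/repo/main.py")
```

Keying clauses on the verb as well as the resource is what separates this from a plain resource ACL: the same chat channel can be readable without approval yet require a human sign-off before the agent sends anything to it.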

Conclusion: Designing the House

OpenClaw is more than a developer tool. It is a field report from the near future of work.

The next decade of software engineering will not only be about building smarter models. It will be about building the social and technical infrastructure that allows those models to live alongside us—safely, productively, and with appropriate boundaries.

We are moving from "Software as a Service" to "Agent as a Resident." The work now is to design the house.